More data doesn't mean better observability

If you're familiar with observability, you know most teams have a "data problem." That is, observability data has exploded as teams have modernized their application stacks and embraced microservices architectures.

If you had unlimited storage, it would be feasible to ingest all your metrics, events, logs, and traces (MELT data) in a centralized observability platform. However, that is simply not the case. Instead, teams index large volumes of data, some portions of which are used regularly and others not. Then, teams must decide whether datasets are worth keeping or should be discarded altogether.

For the past few months I've been playing with a tool called Edge Delta to see how it might help IT and DevOps teams solve this problem by providing a new way to collect, transform, and route your data before it is indexed in a downstream platform, like AppDynamics or Cisco Full-Stack Observability.

What is Edge Delta?

You can use Edge Delta to create observability pipelines or analyze your data from their backend. Typically, observability starts by shipping all your raw data to a central service before you begin analysis. In essence, Edge Delta helps you flip this model on its head. Said another way, Edge Delta analyzes your data as it's created at the source. From there, you can create observability pipelines that route processed data and lightweight analytics to your observability platform.

Why might this approach be advantageous? Today, teams don't have much clarity into their data before it's ingested in an observability platform. Nor do they have control over how that data is treated or flexibility over where the data lives.

By pushing data processing upstream, Edge Delta enables a new kind of architecture where teams can have…

- Transparency into their data: "How valuable is this dataset, and how do we use it?"

- Controls to drive usability: "What is the ideal shape of that data?"

- Flexibility to route processed data anywhere: "Do we need this data in our observability platform for real-time analysis, or in archive storage for compliance?"

The net benefit here is that you're allocating your resources towards the right data, in its optimal shape and location, based on your use case.

How I used Edge Delta

Over the past few weeks, I've explored a couple of different use cases with Edge Delta.

Analyzing NGINX log data from the Edge Delta interface

First, I wanted to use the Edge Delta console to analyze my log data. To do so, I deployed the Edge Delta agent on a Kubernetes cluster running NGINX. From here, I sent both valid and invalid HTTP requests to generate log data and observed the output via Edge Delta's pre-built dashboards.

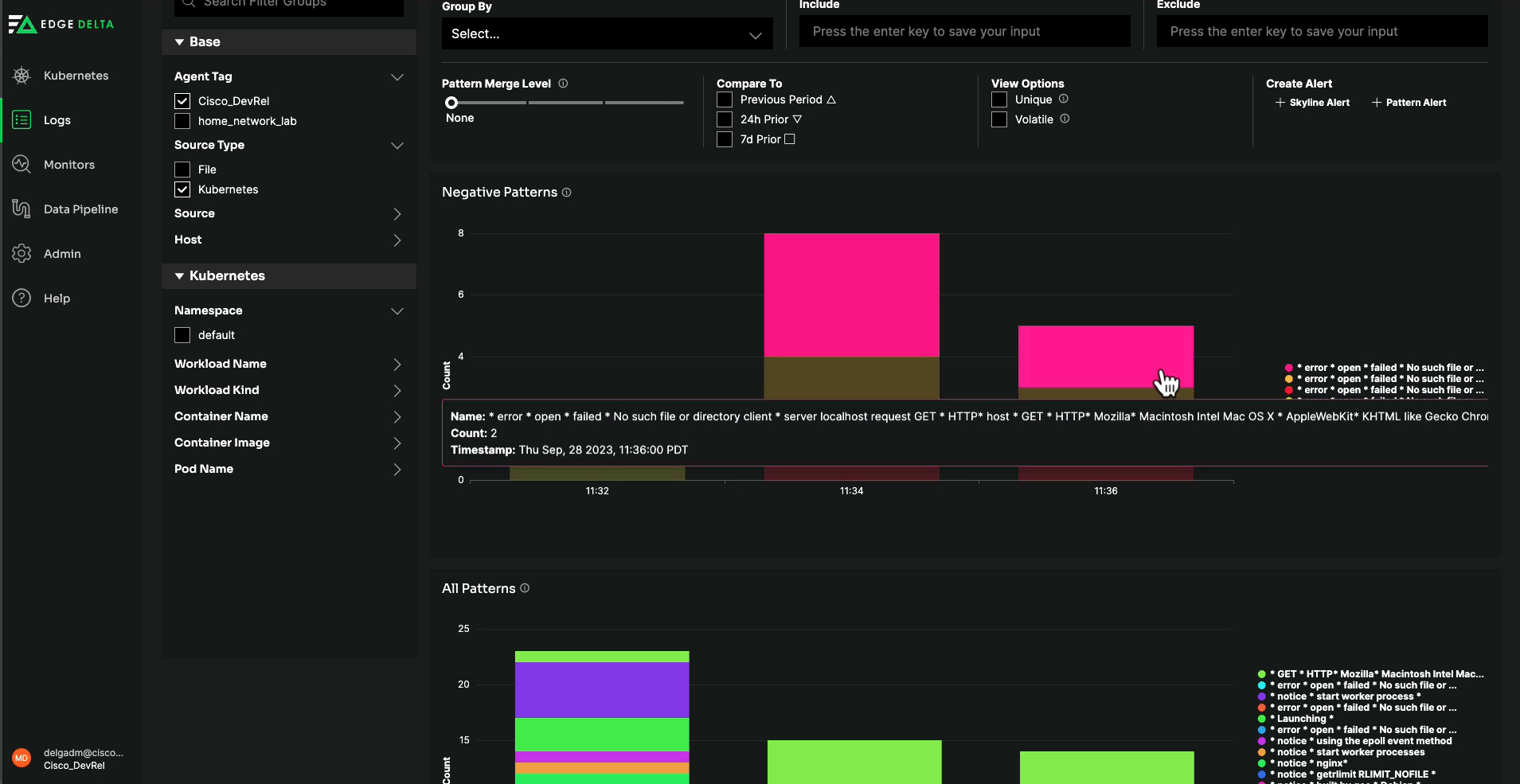

Among the most useful screens was "Patterns." This feature clusters together repetitive loglines, so I can easily interpret each unique log message, understand how frequently it occurs, and decide whether I should investigate it further.
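To make the idea of clustering repetitive loglines concrete, here is a minimal sketch in Python. It is not Edge Delta's algorithm, which this post does not describe. It simply assumes that lines differing only in IP addresses and numbers belong to the same pattern. The names `to_pattern`, `cluster`, and the sample lines are hypothetical.

```python
import re
from collections import Counter

# Assumption: IP addresses and bare numbers are the "variable" parts of a line.
# Order matters: replace IPs first so their octets are not replaced one by one.
_VARIABLE_TOKENS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b\d+\b"), "<NUM>"),
]


def to_pattern(line: str) -> str:
    """Replace variable tokens with placeholders to get a line's pattern."""
    for regex, placeholder in _VARIABLE_TOKENS:
        line = regex.sub(placeholder, line)
    return line


def cluster(lines):
    """Count how many lines fall under each pattern."""
    return Counter(to_pattern(line) for line in lines)


# Hypothetical, simplified access-log lines (not real NGINX output).
sample_lines = [
    '10.0.0.1 "GET /index.html" 200 612',
    '10.0.0.2 "GET /index.html" 200 612',
    '10.0.0.3 "GET /missing" 404 153',
]

patterns = cluster(sample_lines)
for pattern, count in patterns.most_common():
    print(count, pattern)
```

Note that this naive version also collapses status codes into `<NUM>`, so a real implementation would likely treat some fields as meaningful rather than variable.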

Edge Delta's Patterns feature makes it easy to interpret data by clustering together repetitive log messages and provides analytics around each event.

Creating pipelines with Syslog data

Second, I wanted to manipulate data in flight using Edge Delta observability pipelines. Here, I installed the Edge Delta agent on my Mac. Then I exported Syslog data from my Cisco ISR1100 to my Mac.

From within the Edge Delta interface, I configured the agent to listen on the appropriate TCP and UDP ports. Now, I can apply processor nodes to transform (and otherwise manipulate) my data before it hits my downstream analytics platform.

Specifically, I applied the following processors:

- Mask node to obfuscate sensitive data. Here, I replaced social security numbers in my log data with the string 'REDACTED'.

- Regex filter node, which passes along or discards data based on a regex pattern. For this example, I wanted to exclude DEBUG level logs from downstream storage.

- Log to metric node for extracting metrics from my log data. The metrics can be ingested downstream in lieu of raw data to support real-time monitoring use cases. I captured metrics to track the rate of errors, exceptions, and negative sentiment logs.

- Log to pattern node, which I alluded to in the section above. This creates "patterns" from my data by grouping together similar loglines for easier interpretation and less noise.
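The first three processors in this list can be sketched in plain Python to show what each step does to a stream of log lines. This is an illustration only, not Edge Delta's configuration or API. The regular expressions, the function names (`mask`, `keep`, `process`), the metric names, and the sample lines are all assumptions made for the example.

```python
import re
from collections import Counter

# Assumed formats: SSNs look like 123-45-6789, log levels appear as bare words.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
DEBUG_RE = re.compile(r"\bDEBUG\b")
ERROR_RE = re.compile(r"\b(?:ERROR|Exception)\b")


def mask(line: str) -> str:
    """Mask step: replace anything shaped like an SSN with 'REDACTED'."""
    return SSN_RE.sub("REDACTED", line)


def keep(line: str) -> bool:
    """Regex filter step: discard DEBUG level lines, pass everything else."""
    return DEBUG_RE.search(line) is None


def process(lines):
    """Run filter, mask, and a simple log-to-metric count over the lines."""
    metrics = Counter()
    kept = []
    for line in lines:
        if not keep(line):
            metrics["dropped_debug"] += 1
            continue
        line = mask(line)
        # Log-to-metric step: count error lines instead of relying on raw logs.
        if ERROR_RE.search(line):
            metrics["errors"] += 1
        kept.append(line)
    return kept, metrics


# Hypothetical log lines for illustration.
raw = [
    "INFO user ssn=123-45-6789 logged in",
    "DEBUG cache warm-up complete",
    "ERROR payment failed for ssn=987-65-4321",
]

kept, metrics = process(raw)
for line in kept:
    print(line)
print(dict(metrics))
```

The sketch counts errors rather than computing a rate. Dividing the counter by the length of a time window would give the rate mentioned above.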

Through Edge Delta's Pipelines interface, you can apply processors to your data and route it to different destinations.

For now, all of this is being routed to the Edge Delta backend. However, Edge Delta is vendor-agnostic, and I can route processed data to different destinations, like AppDynamics or Cisco Full-Stack Observability, in a matter of clicks.

Conclusion

If you're interested in learning more about Edge Delta, you can visit their website (edgedelta.com). From here, you can deploy your own agent and ingest up to 10GB per day for free. Also, check out our video on the YouTube DevNet channel to see the steps above in action. Feel free to post your questions about my configuration below.
